27th June Algorithm Update

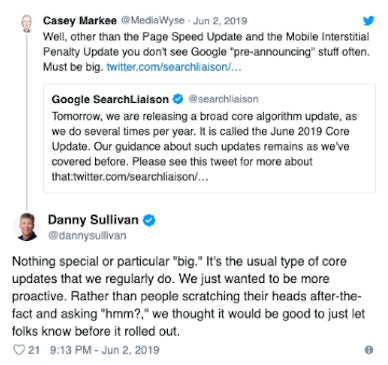

There has been some chatter within the industry about a potential Google search algorithm update on June 27th. However, Google pre-announced June's broad algorithm update and has said it will carry on notifying site owners and SEOs of any major updates in order to be more proactive, so an unannounced major update seems unlikely.

Because Google releases small, mostly unnoticeable changes to its algorithm almost every day to improve search results, the fluctuation being discussed is more likely one of these routine tweaks than a major algorithm update targeting one key area.

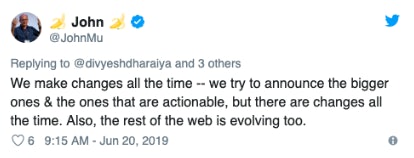

19th June Algorithm Update

Similar talk of an algorithm update surfaced earlier in the month, around June 19th. The reports seemed fairly random across industries, with affected sites seeing an average traffic drop of 10-15%. Many have hypothesized that these traffic shifts signal an update in the pipeline, so it is one to watch out for.

Search Industry Updates

Robots.txt Protocol

At the beginning of July, Google announced that, after 25 years of use and adoption by over 500 million websites, the robots.txt protocol is now working toward becoming an internet standard. The Robots Exclusion Protocol (REP) has been regarded as one of the most fundamental and critical components of the internet, allowing site owners to control whether crawlers can access their site in full or only partially.

Because the protocol was never turned into an official standard, developers have interpreted it differently over the years. To create a unified approach that helps website owners and developers build great user experiences across the internet, Google documented how the REP is used on the modern web and submitted it to the IETF (Internet Engineering Task Force).

This is an important step that should be flagged with developers who parse robots.txt files. Under the proposed standard, parsers must process at least the first 500 kibibytes (KiB) of a robots.txt file. Defining a maximum file size ensures that connections are not held open for too long, alleviating unnecessary strain on servers.
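To make the size limit concrete, here is a minimal sketch of a parser that reads only the first 500 KiB (500 × 1024 = 512,000 bytes) of a robots.txt file. It relies on Python's standard-library `urllib.robotparser` module. The `MAX_ROBOTS_BYTES` constant and the `parse_robots` helper are hypothetical names introduced for illustration. `urllib.robotparser` predates the draft standard and applies the first matching rule rather than the most specific one, so this shows only the size cap and is not a fully conforming implementation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical constant: 500 KiB = 500 * 1024 = 512,000 bytes, the minimum
# amount of a robots.txt file the draft standard says parsers must process.
MAX_ROBOTS_BYTES = 500 * 1024


def parse_robots(raw: bytes) -> RobotFileParser:
    """Parse a robots.txt body, ignoring any bytes beyond MAX_ROBOTS_BYTES."""
    # Cut the body at the size limit; rules after this point are never seen.
    # Note: the cut may also leave a partial final line, which is parsed as-is.
    truncated = raw[:MAX_ROBOTS_BYTES]
    # Decode leniently, since the cut may split a multi-byte UTF-8 character.
    text = truncated.decode("utf-8", errors="ignore")
    parser = RobotFileParser()
    # parse() takes an iterable of lines and records the rule groups.
    parser.parse(text.splitlines())
    return parser


if __name__ == "__main__":
    rules = b"User-agent: *\nDisallow: /private/\n"
    robots = parse_robots(rules)
    print(robots.can_fetch("ExampleBot", "https://example.com/private/page"))  # False
    print(robots.can_fetch("ExampleBot", "https://example.com/blog/"))  # True

    # A rule placed after 600 KiB of padding falls outside the limit and is ignored.
    oversized = b"User-agent: *\n" + b"#" * (600 * 1024) + b"\nDisallow: /\n"
    print(parse_robots(oversized).can_fetch("ExampleBot", "https://example.com/"))  # True
```

In the last example, the `Disallow: /` rule would normally block the whole site. It is dropped because it sits beyond the 500 KiB cut-off, which is exactly the behaviour site owners should keep in mind if their robots.txt file is unusually large.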

If you’re unsure whether this affects your site, get in touch with the team or read Google's developer documentation for further information.

Listing Restrictions on the SERPs

On June 6, Google announced that it was limiting the number of listings from the same site that appear in the top search results.

The change means that a user will usually not see more than two results from the same website in the top results for a search term. Google also said that it would generally treat subdomains as part of the root domain, except where it deems it relevant to treat them as separate sites for diversity purposes.

This is an important development, as it gives smaller businesses a greater chance of ranking on SERPs traditionally dominated by larger websites, such as Yelp or Quora.
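To illustrate the idea, here is a toy model of a per-site cap applied to an already-ranked list of URLs. It is not Google's ranking system. The `root_domain` and `diversify` helpers are hypothetical. The cap is a parameter set to 2 by default, reflecting Google's statement that users usually won't see more than two listings from the same site. The model also assumes, naively, that a site's "root domain" is the last two labels of its hostname.

```python
from collections import Counter
from urllib.parse import urlsplit


def root_domain(url: str) -> str:
    """Naively collapse a hostname to its last two labels.

    Assumption: this treats blog.example.com and example.com as one site,
    but it mishandles multi-part suffixes such as example.co.uk.
    """
    host = urlsplit(url).hostname or ""
    return ".".join(host.split(".")[-2:])


def diversify(ranked_urls: list[str], max_per_site: int = 2) -> list[str]:
    """Keep the ranked order but skip results once a site reaches its cap."""
    seen = Counter()  # number of results kept so far, per root domain
    kept = []
    for url in ranked_urls:
        site = root_domain(url)
        if seen[site] < max_per_site:
            kept.append(url)
            seen[site] += 1
    return kept


if __name__ == "__main__":
    results = [
        "https://www.example.com/a",
        "https://shop.example.com/b",
        "https://example.com/c",
        "https://other.org/d",
    ]
    print(diversify(results))
    # ['https://www.example.com/a', 'https://shop.example.com/b', 'https://other.org/d']
```

Because the subdomains collapse into `example.com`, the third `example.com` result is skipped and the smaller site moves up. This model does not capture the real system's exceptions, such as subdomains Google treats as separate sites, nor how it chooses replacement results.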

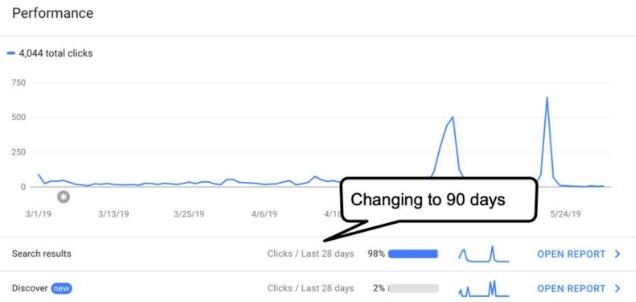

Google Search Console Display Data

On June 12, Google announced that Search Console would now display data from search clicks and Google Discover over the past 90 days.

Previously, Search Console had shown data from only the last 28 days. While this was a relatively minor industry update, the news was still welcomed by SEOs, many of whom now have a better overview of their site’s performance.

Google Taking Action on Large Websites

In a webmaster hangout session held on 1 July, John Mueller revealed that Google employees had discussed possibly taking action against large websites that lease out their subdomains to third parties. While refraining from calling the practice ‘spam’, Mueller acknowledged that negative feedback had called the practice into question and that the search engine giant was looking for a solution.

He suggested that perhaps “the right approach is to find a way to figure out… what is the primary topic of this website and focus more on that and then kind of leave these other things on the side.” He did, however, say that there was “unlikely to be a simple answer that could be applied to every website”.

Have we missed anything out? Let us know in the comments.

Read further algorithmic updates from the team, or contact the team today.