According to James Murray from Bing at the Ecommercial Conference 2016, the world has gone mad.

We have, as marketers and retailers, created things that we simply don’t need, just because it’s possible (see iPotty and the IoT ‘moon cup’).

But, as he goes on to ask: is this crazy? Or is there a very human need behind it?

He used Answer the Public to find out what people are searching for around the term ‘potty’, using the iPotty example. Large numbers of people are searching, in their hundreds and thousands, for terms like ‘how do I potty my son’, which may well have led to a perceived need for a product like a potty that integrates an iPad dock. Suddenly, this doesn’t seem too crazy: it’s responding to a very real need.

The moon cup raised $169,000 on Kickstarter within 2 weeks. What might be considered an unnecessary technology becomes a product that answers a real problem.

So, as James says, we need to reflect on the way we respond to human needs.

The fundamental flaw with search

When most of us want to find something, we go to Google (or Bing – they have 23% market share in the UK now!). Here, the search box controls us.

We’ve been conditioned to search in a search-engine-friendly manner. When we want to find a holiday, we don’t use natural language; we type ‘holiday cheap Spain’. It’s not a natural human experience.

If we take a search for ‘weather’ as an example, here’s how we’ve searched:

- 20 years ago, we searched “weather”

- 10 years ago, we searched “london weather”

- Today, we can use voice search to ask things like “will I need an umbrella tomorrow”

James uses Cortana as his example, stating how she is able to cleverly associate keywords with natural language and deliver a result which meets the intent.

Machine learning has advanced hugely in recent years, particularly in how well machines can understand what you’re saying.

At the moment, voice search might seem small, but this, says James, is not a fad. 50% of search will be on voice by 2020, according to data from Comscore and Mary Meeker’s Internet Trends (kpcb.com/internettrends).

How voice search changes search behaviour

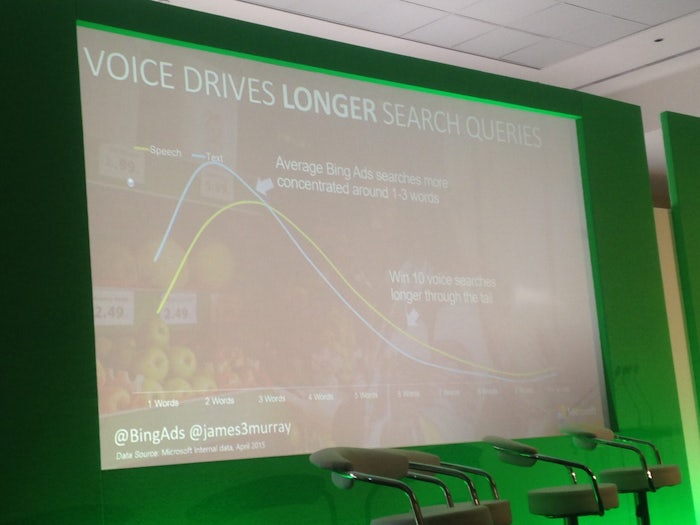

Voice searches tend to be much longer than typed searches.

This has various implications for online businesses, as James explains:

1) Use broad match

James recognises that broad match can seem like a crazy idea. But, as he explains, even the best marketer in the world can’t know every single thing people will search for. You simply can’t capture all of that intent with exact keywords. Typically, advertisers miss out on 50% of clicks on Bing Ads when they don’t use broad match.

2) Target question keywords

Let’s say you were buying some Star Wars Lego. You might search:

a) Cheap Star Wars Lego

b) How much is Star Wars Lego?

The second option is more common in voice search. We speak more naturally and are more inclined to ask questions. Don’t ignore this.

3) Consider your prepositions

Typically, we ignore prepositions in exact match. Consider the difference:

a) How much is a Lego X wing

b) How much for a Lego X wing

You need to consider the many different ways we use prepositions when we search using our voice.
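Points 2 and 3 can be sketched in a few lines of Python. This is only an illustration, not something James presented: the word lists and function names below are assumptions, and you would tune them against your own search query reports. The idea is to flag queries phrased as questions and to group queries that differ only in small function words, so that variants like the two X wing searches above end up in the same bucket.

```python
import re

# Hypothetical word lists for illustration only; adjust them to your own data.
QUESTION_WORDS = {"how", "what", "where", "when", "why", "who", "which",
                  "can", "will", "is", "are", "do", "does"}
FILLER_WORDS = {"for", "of", "on", "in", "at", "to", "with", "from", "by",
                "about", "a", "an", "the", "is", "are"}


def tokens(query):
    """Lower-case the query and split it into simple word tokens."""
    return re.findall(r"[a-z0-9]+", query.lower())


def is_question(query):
    """True if the query starts with a typical question word."""
    words = tokens(query)
    return bool(words) and words[0] in QUESTION_WORDS


def variant_key(query):
    """Drop prepositions and other filler words so near-identical queries match."""
    return " ".join(w for w in tokens(query) if w not in FILLER_WORDS)


def group_variants(queries):
    """Group queries that share the same key once filler words are removed."""
    groups = {}
    for query in queries:
        groups.setdefault(variant_key(query), []).append(query)
    return groups


if __name__ == "__main__":
    searches = [
        "Cheap Star Wars Lego",
        "How much is Star Wars Lego?",
        "How much is a Lego X wing",
        "How much for a Lego X wing",
    ]
    for search in searches:
        print(search, "| question:", is_question(search))
    print(group_variants(searches))
```

Run against an exported search terms report, a grouping like this can show which question phrasings and preposition variants you are not yet bidding on.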

The future for voice search

James suggests that “voice search is going to become the new mobile”.

By this, he means it’s going to be the next topic of discussion in our industry. “The year of voice search” will slowly trickle through and become part of our normal behaviour. Get ahead of the game now and you can start learning, improving and building on your campaigns in a more voice-focused manner.

Conversation as a platform

Something Bing is moving toward is this idea of conversation as a platform, whereby machines are able to understand what you’re talking about and interact with you accordingly.

Bing sees the future revolving around 3 key elements:

1) User

2) Bots

3) Super bots (digital assistants)

Beyond keywords

Beyond keywords, there’s a very exciting new development which is known as ‘visual search’.

This is where you can, for example, see a dress you like and send a photo of it to Cortana to find that dress for you.

There’s a team in Redmond, near Seattle, tasked with cataloguing every object in the world. And this isn’t easy.

Consider a ‘chair’ for example. A ‘chair’ doesn’t have ‘chairness’. It might be a seat with four legs. But it might have three legs, or one leg. It might have no ‘legs’. It might be deep seated, or it might be shallow. It might be backless. There are so many things it ‘might’ be, but we as humans know exactly what it is without needing to be able to use chairness rules to help us.

Project ‘Adam’, run by the same team, is gathering information about objects. The example they started with was dogs: people could submit photos of their dogs and say ‘this is my husky’, ‘this is my poodle’ and so on.

Go to Captionbot.ai and you can see this in action. The example James shared was a photo of him and his wife on their wedding day, cutting their cake. It’s a complex photo with lots going on. Cortana is able to recognise that it is a photo of a man holding a knife cutting a wedding cake.

Now, Cortana cannot use rules to understand this. It uses inferences. It sees a woman in a white dress and infers it’s a wedding day. It infers that a man and a woman standing together at a wedding with a large knife are, probably, cutting a wedding cake. And it uses that insight to assess what the photo shows.

The original mission of Microsoft was a computer on every desk in every home.

Their ambition today is to empower every person on the planet to do and achieve more.

That includes people from niche and minority groups, not just the early adopters. They’re focusing particularly on disability groups, like the blind.

He shared a video which showed how Bing’s bot capabilities are being used to help blind people. It’s an app that runs on smartphones and smart glasses and uses the image recognition technology to tell you what is happening around you.

Summary

This isn’t search as we usually think of it. It’s not 10 blue links on a screen.

“It’s still search. It’s just a little bit more human.”

Takeaways

The growth of Bing has really accelerated in recent years (months, even), with iOS using Bing more and more and Bing appearing as the default search engine across various devices today.

As digital marketers, we need to recognise this. Historically, we’ve looked predominantly at Google and assumed whatever Google does, other search engines will follow.

However, as the scope of technology continues to grow, so too do the opportunities for search engines. We’re starting to see more of a discrepancy in the way search engines are focusing their time. It was only a few weeks ago that Google announced its latest smartphone, Pixel, and a range of other hardware tools. Meanwhile, Bing is pushing its voice search more and more.

Now, that’s not to say Google isn’t investing in voice, as we know it is (indeed, Pixel includes Google’s latest voice assistant iteration, as reviewed here by Search Engine Land). But we need to look not only at what the search giant does, but at what technology is making possible across the board.

Practically speaking, as marketers we still need to get the basics right and appeal to text search. This won’t change overnight. But we also need to be aware of the growth of voice search. In my opinion, areas for focus in the light of voice search will be:

- User-centred marketing (‘emotional experience’ is already coming in to overtake ‘user experience (UX)’)

- Brand positioning; have a clear view on what differentiates you from your competitors

- Content marketing; create engaging, deep content which appeals to much longer tail queries and natural language

- Basics of copywriting; ensure, as you always should do, that you utilise natural language and write for humans, not search engines

- Schema markup; making it easier for search engines to understand your pages and surface results accordingly, in text and voice
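To make the schema markup point concrete, here is a minimal sketch in Python that builds schema.org `FAQPage` markup as JSON-LD for a page answering a question-style query. The helper names and the placeholder answer text are assumptions for illustration, and nothing in James’s talk confirmed that any particular search engine uses this markup for voice results.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage structure from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Wrap the JSON-LD in the script tag that would sit in the page's HTML."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


if __name__ == "__main__":
    # Placeholder content: swap in your own questions and answers.
    pairs = [("How much is a Lego X wing?", "Replace with your own answer text.")]
    print(as_script_tag(faq_jsonld(pairs)))
```

The question text here deliberately mirrors the natural-language phrasing people use in voice search, which ties the markup back to the content marketing and copywriting points above.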

James Murray at PPC Hero Conference

James spoke at last week’s PPC Hero conference too. Take a look at Becky’s blog on his talk (a longer version of this one) for more detailed information.